The Capital Cycle of Compute

Artificial intelligence is often described as a software revolution. Progress is measured through model architectures, benchmark scores, and the emergence of new applications powered by machine learning systems. Each generation of models appears to advance the frontier through algorithmic improvement.

But this narrative captures only part of the story.

Behind modern AI sits a rapidly expanding industrial system designed to produce compute at unprecedented scale. Massive GPU clusters, hyperscale data centers, specialized networking fabrics, and large-scale electricity supply have become the physical foundation of machine intelligence. What appears on the surface as a software breakthrough is, in reality, supported by a deep and capital-intensive infrastructure stack.

The development of artificial intelligence is increasingly shaped not only by algorithms, but by capital cycles.

Understanding this dynamic requires shifting perspective from AI models to the infrastructure that makes them possible.

Compute Becomes Infrastructure

For most of the modern software era, compute behaved like an elastic utility. Developers provisioned servers in the cloud, scaled applications dynamically, and paid only for what they consumed. The infrastructure existed, but it remained largely abstracted away from the user.

Large-scale AI has fundamentally changed this relationship.

Training frontier models now requires thousands — often tens of thousands — of GPUs operating in coordinated clusters. These chips must be connected through high-bandwidth networking systems capable of moving enormous volumes of data with extremely low latency. The clusters themselves require specialized facilities capable of delivering immense power density and advanced cooling systems.

This infrastructure cannot be deployed instantly. It requires long planning horizons, specialized engineering, and massive capital investment.

In effect, compute has transitioned from a software abstraction into a form of industrial infrastructure.

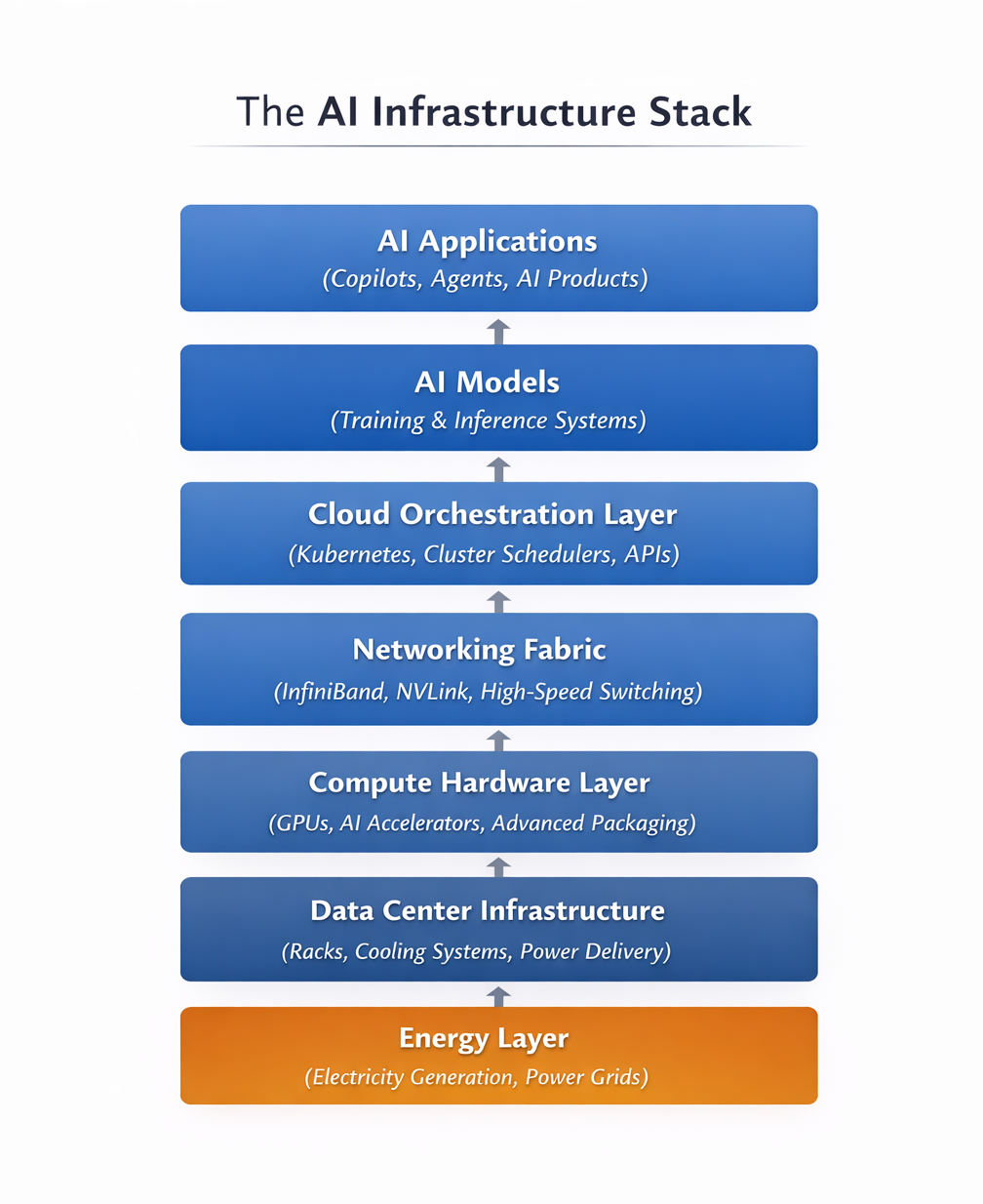

The AI Infrastructure Stack

Modern AI systems sit at the top of a deep stack of infrastructure layers.

At the base lies energy. Electricity generation and grid capacity determine how much power can be delivered to large computing facilities. As compute clusters grow in size and density, energy availability increasingly becomes a gating constraint.

Above this layer sits the physical data center infrastructure: the buildings, cooling systems, rack architectures, and power delivery systems that house compute hardware. Modern AI data centers are designed around power densities that would have been unusual even a decade ago.

Within these facilities operate the compute clusters themselves. GPUs and AI accelerators perform the matrix operations required for training and inference, while high-performance networking technologies connect thousands of processors into a single distributed system.

On top of the hardware stack sits the orchestration layer. Software systems schedule workloads, allocate compute resources, and expose infrastructure through cloud platforms and APIs.

Only at the very top of this stack do we encounter the AI models and applications that capture public attention.

Figure: The AI Infrastructure Stack

Artificial intelligence systems sit on top of a deep physical infrastructure stack. Electricity powers data centers, which house compute hardware connected through high-speed networking. Cloud orchestration software converts this hardware into usable compute, enabling the training and operation of large AI models.

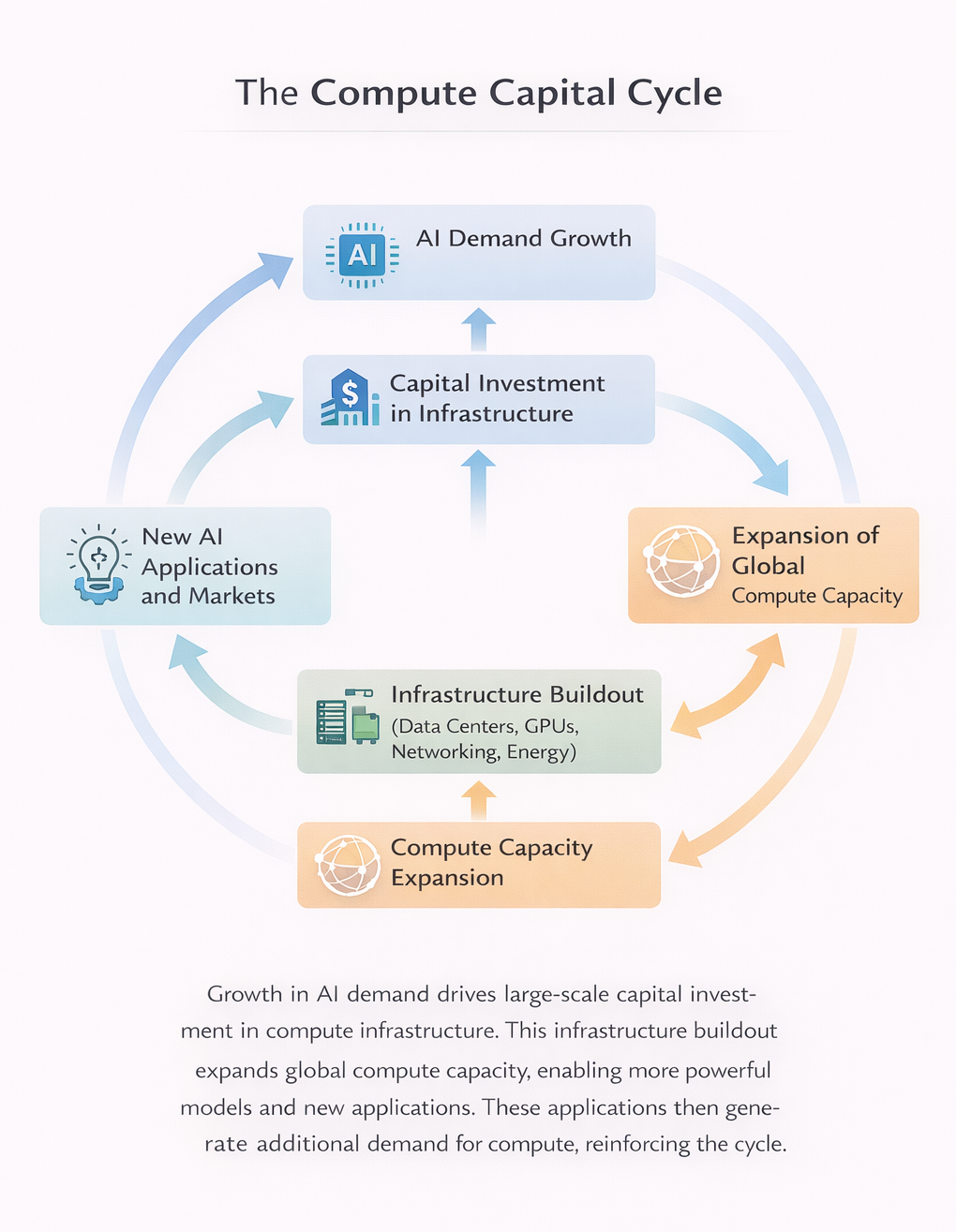

The Capital Cycle Dynamic

Industries built on heavy infrastructure tend to follow recognizable capital cycles.

When demand for a resource increases rapidly, companies deploy large amounts of capital to expand production capacity. Because infrastructure takes time to build, supply initially remains constrained. Early suppliers often capture unusually strong economics during this phase.

Over time, however, new capacity enters the system. Supply gradually catches up with demand, and economic returns normalize as competition increases.
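The lag between ordering capacity and bringing it online is what produces the tight early phase. A minimal sketch of that mechanism follows, as a toy model rather than a forecast: the function name, the demand path, and the three-period build lag are all illustrative assumptions, and "tightness" (demand divided by capacity) is only a rough stand-in for supplier pricing power.

```python
from collections import deque


def simulate_capital_cycle(
    demand_path=(100.0, 100.0, 200.0, 200.0, 200.0, 200.0, 200.0, 200.0),
    initial_capacity=100.0,
    build_lag=3,
):
    """Toy capital cycle: capacity ordered in period t arrives in t + build_lag.

    All inputs are hypothetical. Returns a list of
    (period, demand, capacity, tightness) tuples, where tightness > 1
    means demand exceeds installed capacity.
    """
    capacity = initial_capacity
    # Capacity under construction, oldest order first.
    pipeline = deque([0.0] * build_lag)
    history = []
    for period, demand in enumerate(demand_path):
        # Orders placed build_lag periods ago come online now.
        capacity += pipeline.popleft()
        tightness = demand / capacity
        history.append((period, demand, capacity, tightness))
        # Suppliers order enough to close the gap that is not already
        # covered by capacity still under construction.
        shortfall = max(0.0, demand - capacity - sum(pipeline))
        pipeline.append(shortfall)
    return history


if __name__ == "__main__":
    for period, demand, capacity, tightness in simulate_capital_cycle():
        print(f"t={period} demand={demand:.0f} capacity={capacity:.0f} "
              f"tightness={tightness:.2f}")
```

In this sketch demand doubles in period 2, but the matching capacity does not arrive until period 5. Tightness sits at 2.0 for three periods and then returns to 1.0, mirroring the constrained-then-normalized pattern described above. A longer build lag stretches the window in which existing suppliers face little competition.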

Compute is now entering this type of cycle.

The rapid growth of AI workloads has triggered one of the largest infrastructure expansions in the history of the technology industry. Hyperscale cloud providers are investing tens of billions of dollars in new data centers, GPU clusters, and networking systems. Semiconductor manufacturers are scaling production of advanced accelerators, while utilities and power providers prepare for dramatically higher electricity demand.

Despite this surge of investment, supply remains constrained. GPU availability is limited, power infrastructure is under strain in several regions, and new data center construction continues to lag demand.

These conditions are typical of the early phase of a capital cycle.

Figure: The Compute Capital Cycle

Growth in AI demand drives large-scale capital investment in compute infrastructure. This infrastructure expands global compute capacity, enabling more powerful models and new applications. These applications then generate additional demand for compute, reinforcing the cycle.

The Compute Arms Race

The largest technology companies have recognized that compute capacity will shape the competitive landscape of AI.

Companies such as Microsoft, Amazon, Google, and Meta are deploying infrastructure investment at a scale rarely seen in the software industry. Their capital expenditures increasingly resemble those of industrial companies rather than traditional technology firms.

This spending is not simply about supporting internal workloads. It is about controlling the global supply of compute.

Owning large-scale compute infrastructure creates structural advantages. It lowers the cost of training models, allows companies to operate clusters beyond the reach of smaller competitors, and provides the foundation for cloud platforms that distribute AI capabilities across entire ecosystems.

As AI adoption grows, compute becomes a strategic resource.

The companies that control compute capacity gain the ability to shape the pace and direction of AI development.

The Emerging Bottleneck

As compute infrastructure expands, a new constraint is beginning to emerge beneath the surface of the system.

Energy.

Large AI facilities now consume enormous quantities of electricity. Training clusters can draw power comparable to small industrial installations, while global inference workloads operate continuously across cloud platforms.

As companies deploy larger and denser compute systems, access to power increasingly determines where new infrastructure can be built.
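To make the scale concrete, a back-of-envelope estimate of facility power can be sketched as accelerator count times per-device power, scaled up for the rest of the server and for facility overhead. Every number below is a placeholder assumption, not a measurement: the function names, the 1.5x server overhead factor, and the 1.2 power usage effectiveness (PUE) are chosen purely for illustration.

```python
HOURS_PER_YEAR = 8760


def facility_power_mw(num_accelerators, watts_per_accelerator,
                      server_overhead=1.5, pue=1.2):
    """Rough facility power draw in megawatts.

    server_overhead: assumed multiplier for CPUs, memory, and networking
                     on top of accelerator power (hypothetical value).
    pue: assumed power usage effectiveness, i.e. total facility power
         divided by IT equipment power (hypothetical value).
    """
    it_load_watts = num_accelerators * watts_per_accelerator * server_overhead
    return it_load_watts * pue / 1e6


def annual_energy_gwh(power_mw, utilization=1.0):
    """Energy used over one year, in gigawatt-hours, at a given utilization."""
    return power_mw * HOURS_PER_YEAR * utilization / 1000


if __name__ == "__main__":
    # Hypothetical cluster: 10,000 accelerators at 700 W each.
    power = facility_power_mw(10_000, 700)
    print(f"Estimated facility power: {power:.1f} MW")
    print(f"Estimated annual energy: {annual_energy_gwh(power):.0f} GWh")
```

Under these assumed inputs the estimate comes to about 12.6 MW of continuous draw. The point of the sketch is the linear scaling: doubling the accelerator count or the per-device power doubles the grid capacity a site must secure, which is why power availability shapes siting decisions.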

In some regions, grid capacity has already become a limiting factor for data center expansion. Securing reliable electricity supply and building the infrastructure required to deliver it is becoming an essential part of the AI ecosystem.

The future trajectory of artificial intelligence may therefore depend not only on advances in chips or algorithms, but on the physical energy systems that support compute.

Where the Economics Accrue

Capital cycles also determine where profits accumulate within an industry.

In the early phases of infrastructure expansion, suppliers of critical components often capture outsized returns. Semiconductor designers, advanced manufacturing facilities, networking providers, and data center equipment manufacturers all participate in the build-out of the compute ecosystem.

Cloud platforms occupy another strategically important position. By aggregating compute resources and exposing them as programmable infrastructure, hyperscalers transform physical assets into scalable services.

As the AI infrastructure stack expands, value creation spreads across multiple layers of the system. Some companies capture economics through hardware innovation, others through infrastructure ownership, and still others through the platforms that convert raw compute into accessible services.

Understanding these dynamics requires looking beyond AI models themselves and examining the industrial system that supports them.

Compute as the Industrial Base of AI

The rise of artificial intelligence is often described as a revolution in software. But the deeper transformation lies in the infrastructure required to produce intelligence at scale.

Training and operating advanced AI systems now depend on massive compute clusters, specialized data centers, high-performance networking, and reliable energy supply. These systems require enormous capital investment and long construction cycles.

In other words, AI is becoming an infrastructure industry.

Like other infrastructure systems before it, the development of compute will follow capital cycles defined by investment booms, capacity expansion, and shifting economic returns across the technology stack.

The future of artificial intelligence will not be determined solely by breakthroughs in research.

It will also be shaped by the infrastructure — and the capital allocation decisions — that make large-scale machine intelligence possible.