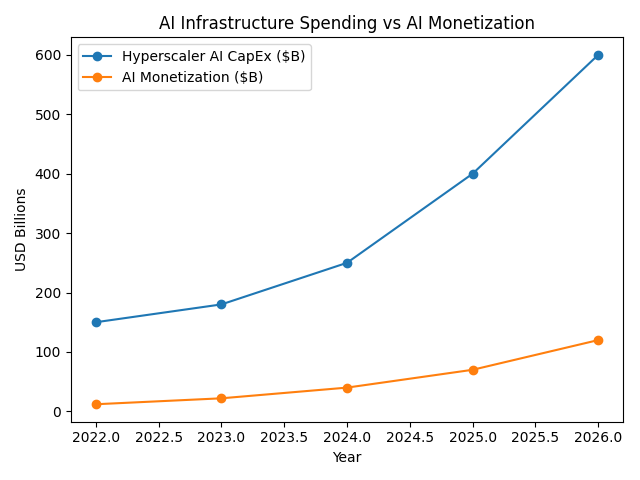

In the early stages of the AI economy, infrastructure spending is expanding far faster than the revenue generated by AI applications.

AI CapEx vs AI Monetization

The dominant narrative around artificial intelligence focuses on capability. Models are improving, benchmarks are rising, and new applications appear almost weekly. The discussion often centers on which company has the best model, the most useful product, or the fastest path to consumer adoption.

But beneath this narrative lies a more important dynamic shaping the AI economy.

The current phase of artificial intelligence is defined not primarily by applications, but by capital expenditure.

Across the technology industry, companies are committing hundreds of billions of dollars to build the infrastructure required to train and run AI systems. Data centers are expanding, GPU clusters are scaling to unprecedented sizes, and energy infrastructure is becoming a central constraint in the deployment of machine intelligence.

At the same time, the revenue generated by AI applications remains relatively modest compared to the scale of the investment.

This creates a structural imbalance: AI infrastructure is expanding far faster than AI monetization.

Understanding this gap is essential to understanding how the AI industry will evolve.

The Infrastructure Phase of AI

Large technology companies are currently engaged in one of the largest infrastructure build-outs in the history of the computing industry.

Hyperscalers are committing extraordinary levels of capital to expand their AI capacity. Data centers designed specifically for AI workloads are being constructed around the world, filled with thousands of specialized GPUs connected through high-bandwidth networking fabrics.

Unlike in previous software cycles, scaling AI capability requires enormous physical infrastructure. Training large models demands massive compute clusters, and operating those models at global scale requires even more capacity.

The result is an industry that increasingly resembles an industrial system rather than a traditional software ecosystem.

Semiconductor companies supply the accelerators. Hardware vendors assemble AI servers. Networking firms provide the fabric that connects thousands of GPUs into a single cluster. Utilities and power providers supply the energy required to operate these systems.

What emerges is not simply a new category of software.

It is the construction of a new computational substrate for intelligence.

The Scale of the Investment

The magnitude of capital flowing into AI infrastructure is historically unusual even by technology standards.

The largest hyperscalers are now investing at levels rarely seen in the software industry. Combined capital expenditures from companies such as Amazon, Microsoft, Alphabet, and Meta are expected to run to hundreds of billions of dollars annually as they expand their AI data center capacity.

Recent estimates suggest that hyperscaler capital spending could approach $600 billion per year within the next few years as AI infrastructure continues to scale.

This spending includes:

- GPU accelerators and custom AI chips

- high-density data center facilities

- high-bandwidth networking infrastructure

- liquid cooling systems for dense compute clusters

- large-scale electrical and power infrastructure

Hyperscaler infrastructure spending is scaling far faster than the revenue currently generated by AI applications.

The capital intensity of this investment marks a significant departure from traditional software economics.

Traditional SaaS companies typically spend 3–6% of revenue on capital expenditures, while early cloud providers historically operated in the 10–15% range.

Today, several AI leaders are allocating far larger shares of revenue toward infrastructure.

| Company | Revenue | CapEx | CapEx % of Revenue |
|---|---|---|---|
| Amazon | ~$575B | ~$75B | ~13% |
| Microsoft | ~$245B | ~$55B | ~22% |
| Alphabet | ~$307B | ~$52B | ~17% |
| Meta | ~$134B | ~$37B | ~27% |
| Oracle | ~$53B | ~$9B | ~17% |

Some companies building AI systems are now spending 20–30% of revenue on capital expenditures, levels more typical of infrastructure industries than software companies.
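The ratios in the table can be recomputed from its rounded revenue and CapEx figures. The short Python sketch below does that and lists the companies at or above the 20% mark. The names `FIGURES`, `capex_intensity`, and `heavy_spenders` are illustrative only, and because the inputs are approximate, a recomputed ratio can differ from the table by about a point (Meta comes out near 27.6%).

```python
# Approximate figures in billions of USD, copied from the table above.
# Each entry is (revenue, capex).
FIGURES = {
    "Amazon": (575, 75),
    "Microsoft": (245, 55),
    "Alphabet": (307, 52),
    "Meta": (134, 37),
    "Oracle": (53, 9),
}


def capex_intensity(revenue: float, capex: float) -> float:
    """Return capital expenditure as a percentage of revenue."""
    return 100.0 * capex / revenue


intensity = {
    name: capex_intensity(revenue, capex)
    for name, (revenue, capex) in FIGURES.items()
}

# Companies at or above the 20% threshold discussed in the text.
heavy_spenders = [name for name, pct in intensity.items() if pct >= 20.0]

# Print companies from most to least capital-intensive.
for name, pct in sorted(intensity.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<10} {pct:5.1f}%")
print("At or above 20%:", heavy_spenders)
```

On these rounded inputs, only Microsoft and Meta clear the 20% threshold, while Amazon sits within the 10–15% range cited for early cloud providers.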

Artificial intelligence is turning computing into a capital-intensive system.

Why Monetization Lags

While infrastructure investment has accelerated rapidly, monetization has progressed more gradually.

Many of today’s AI products are priced similarly to traditional software subscriptions. Coding assistants, productivity copilots, and consumer AI tools often generate revenue measured in tens of dollars per user per month. Even enterprise AI offerings frequently follow familiar SaaS pricing models based on seats, subscriptions, or API usage.

These pricing structures do not yet reflect the full cost of the infrastructure required to operate large AI systems.

Running modern AI models requires substantial compute resources for every inference. When millions of users interact with these systems simultaneously, the infrastructure costs become significant.

As a result, many companies are effectively subsidizing early AI usage while they search for sustainable business models.
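The subsidy can be made concrete with simple per-user arithmetic. The sketch below is purely illustrative: the price, the usage levels, and the compute cost per request are hypothetical placeholders rather than measured figures, and the function names are invented for this example.

```python
def monthly_margin_per_user(price_usd, requests_per_month, cost_per_request_usd):
    """Subscription revenue minus inference cost for one user in one month."""
    return price_usd - requests_per_month * cost_per_request_usd


def break_even_requests(price_usd, cost_per_request_usd):
    """Monthly request count at which inference cost equals the subscription price."""
    return price_usd / cost_per_request_usd


# Hypothetical inputs, chosen only to illustrate the arithmetic.
PRICE = 20.0             # USD per user per month (assumed)
COST_PER_REQUEST = 0.01  # USD of compute per inference (assumed)

light_user = monthly_margin_per_user(PRICE, 500, COST_PER_REQUEST)
heavy_user = monthly_margin_per_user(PRICE, 5000, COST_PER_REQUEST)
threshold = break_even_requests(PRICE, COST_PER_REQUEST)

print(f"Light user margin: {light_user:+.2f} USD")
print(f"Heavy user margin: {heavy_user:+.2f} USD")
print(f"Break-even usage:  {threshold:.0f} requests per month")
```

Under these assumed inputs, a flat subscription is profitable for light users and loss-making for heavy ones, which is one way a provider ends up subsidizing its most engaged customers.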

The applications are real and increasingly useful.

But the economic model for capturing their value is still evolving.

The Timing Mismatch

The result is a timing mismatch between infrastructure investment and application revenue.

Infrastructure must be built before the applications that depend on it can emerge. AI systems require large-scale compute clusters, reliable networking, and substantial energy capacity long before those systems are fully utilized.

In economic terms, capital is being deployed ahead of demand.

This pattern is not unusual in infrastructure cycles. Railroads were built before the regions they connected were fully developed. Fiber optic networks were deployed years before internet traffic grew large enough to justify the investment.

Artificial intelligence appears to be following a similar trajectory.

Companies are building the computational capacity required for an AI-driven economy before the full range of AI applications has been discovered.

From Software Economics to Infrastructure Economics

One of the most significant shifts in the AI era is the changing economic structure of the technology industry.

For decades, the most valuable technology companies operated under software economics. Once software was developed, it could be distributed globally with near-zero marginal cost.

Artificial intelligence introduces a different model.

Running AI systems requires continuous compute, specialized hardware, and large amounts of energy. The marginal cost of generating intelligence does not approach zero the way the marginal cost of distributing traditional software does.

Instead, value increasingly flows toward the companies that control the infrastructure required to produce and operate machine intelligence.

Semiconductor manufacturers, cloud providers, data center operators, networking firms, and energy suppliers all become central participants in the AI economy.

The technology industry begins to resemble an infrastructure industry.

When Monetization Catches Up

Over time, the gap between AI CapEx and AI monetization will likely narrow.

As AI systems move deeper into enterprise workflows, industrial processes, and operational decision-making, the economic value generated by each AI interaction could increase significantly.

Instead of assisting with narrow productivity tasks, AI systems may begin to automate complex knowledge work, manage logistics networks, assist in engineering design, or support financial decision-making.

When AI becomes embedded in the core operations of businesses, the willingness to pay for these systems could increase dramatically.

At that point, the infrastructure currently being built may begin to generate far greater economic returns.

What appears today as excessive spending may ultimately prove to be foundational investment.

The Real Structure of the AI Economy

The early AI economy is often described as a race to build the best models or the most compelling applications.

But at a deeper level, the defining feature of this phase is the massive capital investment required to build the machines that intelligence runs on.

Compute clusters, data centers, networking fabrics, and power infrastructure form the physical foundation of the AI era.

Applications will eventually determine how that intelligence is used.

But the economic structure of the industry is being shaped first by the infrastructure required to produce it.

In the early stages of the AI economy, the most important question is not which application wins.

It is who builds the machines that intelligence runs on.